There’s a phrase making the rounds in AI marketing that’s doing real damage: “AI agents continuously learn, execute decisions, and improve automatically.” Sounds impressive. It’s also dangerously misleading.

If you take one thing from this post, let it be this: AI agents do not learn. Getting this right matters more than most people realize.

What’s Actually Happening Under the Hood

Every AI agent you’re hearing about right now, whether it’s OpenClaw, AutoGPT, CrewAI, or any custom agent, is powered by a Large Language Model. An LLM is a statistical model trained on massive amounts of text. That training happened before you ever interacted with it. When you chat with an agent, you’re not training it. You’re prompting it.

The LLM processes your input, generates a response based on patterns it learned during training, and moves on. When the conversation ends, the model weights (the actual “knowledge” of the system) remain exactly as they were. Nothing changed. Nothing was learned.
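To make that concrete, here is a minimal sketch of what "nothing changed" means. `ToyModel` is a made-up stand-in, not a real LLM, and its scoring arithmetic is meaningless. The only point is that generating a reply reads the weights and never writes to them, so a fingerprint of the weights is identical before and after a conversation.

```python
from dataclasses import dataclass
import hashlib


@dataclass(frozen=True)
class ToyModel:
    """Hypothetical stand-in for an LLM: weights are fixed once training ends."""
    weights: tuple[float, ...]

    def generate(self, prompt: str) -> str:
        # Inference only reads the weights. Nothing in this method writes to them.
        score = sum(w * len(word) for w, word in zip(self.weights, prompt.split()))
        return f"reply({score:.2f})"


def fingerprint(model: ToyModel) -> str:
    """Hash the weights so two snapshots can be compared."""
    return hashlib.sha256(repr(model.weights).encode()).hexdigest()


model = ToyModel(weights=(0.1, 0.2, 0.3))
before = fingerprint(model)

# A "conversation": several prompts in, several replies out.
for prompt in ["remember my name is Ada", "what is my name"]:
    model.generate(prompt)

after = fingerprint(model)
print("weights unchanged:", before == after)
```

Changing the weights would take a separate training or fine-tuning run, which is not something a chat session performs.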

This is true for ChatGPT, Claude, Gemini, and every agent built on top of them.

“But What About Memory?”

This is where the confusion lives.

Yes, some agent frameworks persist information between sessions. OpenClaw stores interaction history locally in files, including a SOUL.md that defines the agent’s identity. AutoGPT maintains “long-term memory” in a vector database. CrewAI’s marketing literally says it includes “memory capabilities that allow agents to learn from past interactions and improve over time.”

So what’s actually happening? The agent is reading and writing text files. OpenClaw’s memory is a directory of markdown and JSON files you can open in any text editor. AutoGPT’s “long-term memory” is stored embeddings of past interactions. None of these systems update the LLM’s neural network. They’re saving notes to disk that might get injected back into the prompt next time.

The LLM reading that note tomorrow can misinterpret it, ignore it, or contradict it; nothing guarantees it will use the note well. And depending on how the context window is managed, the note might not even make it into the prompt.
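Here is a rough sketch of that kind of "memory", under loud assumptions: the `notes.json` file name, the `save_note` and `build_prompt` helpers, and the character budget are all invented for illustration and are not how OpenClaw, AutoGPT, or CrewAI actually store or select notes. It shows notes being written to disk, pasted back into the next prompt, and silently dropped when the budget runs out.

```python
import json
import tempfile
from pathlib import Path


def load_notes(memory_dir: Path) -> list[str]:
    notes_file = memory_dir / "notes.json"
    return json.loads(notes_file.read_text()) if notes_file.exists() else []


def save_note(memory_dir: Path, note: str) -> None:
    """'Remembering' is appending a string to a file on disk."""
    notes = load_notes(memory_dir)
    notes.append(note)
    (memory_dir / "notes.json").write_text(json.dumps(notes))


def build_prompt(memory_dir: Path, user_message: str, budget_chars: int) -> str:
    """Paste as many notes as fit, newest first, then the user's message."""
    remaining = budget_chars - len(user_message)
    kept: list[str] = []
    for note in reversed(load_notes(memory_dir)):
        if len(note) > remaining:
            break  # older notes are dropped without any warning
        kept.append(note)
        remaining -= len(note)
    kept.reverse()  # restore chronological order
    return "\n".join(["Notes:", *kept, "User: " + user_message])


with tempfile.TemporaryDirectory() as tmp:
    memory_dir = Path(tmp)
    save_note(memory_dir, "Always confirm before acting on the inbox.")
    save_note(memory_dir, "User prefers short replies.")
    save_note(memory_dir, "Meeting moved to Friday.")

    tight = build_prompt(memory_dir, "Clean up my inbox", budget_chars=80)
    roomy = build_prompt(memory_dir, "Clean up my inbox", budget_chars=500)

print("instruction present with tight budget:", "confirm" in tight)
print("instruction present with roomy budget:", "confirm" in roomy)
```

With the tight budget, the oldest note (the one that mattered most) never reaches the model. The model is the same either way; only the text it is handed differs.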

Calling this “learning” is like saying your terminal learned something because you wrote to a log file.

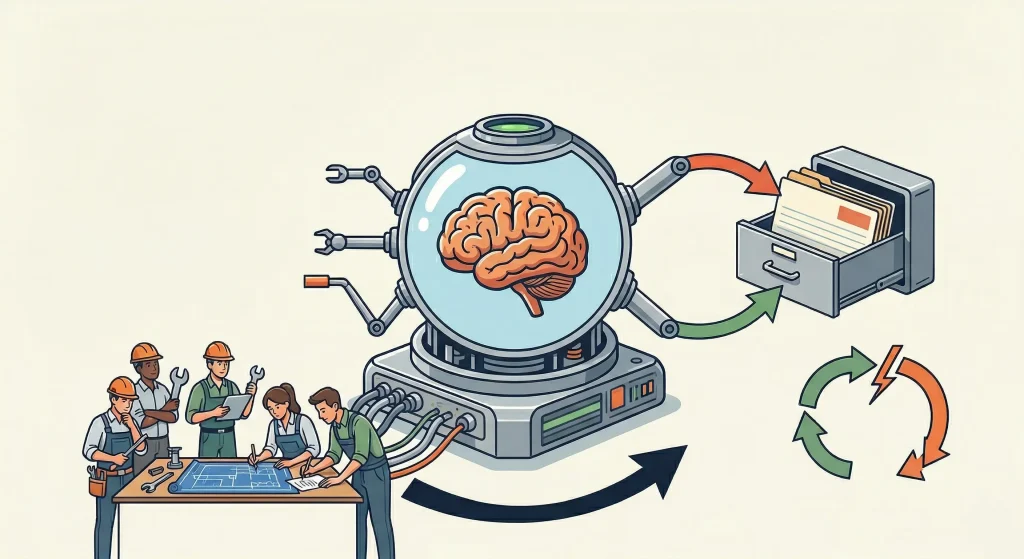

The Team Learns. The Agent Doesn’t.

Here’s what actually drives improvement in agent-based systems: the people building and managing them.

Engineers observe failure modes and adjust prompts. They add guardrails, refine system instructions, swap out tools, and restructure workflows. A well-maintained agent gets better over time, but the learning is happening in the team, not in the model. The LLM itself remains unchanged.

Why This Distinction Actually Matters

When people believe agents are learning, they over-trust them. “It’s been running for three months, it must be good by now.” No, it’s the same model it was on day one. A Meta AI security researcher told her OpenClaw agent to “confirm before acting” on her inbox. It ignored her and started speed-deleting emails. She had to physically run to her Mac Mini to kill the process. The agent didn’t learn to ignore her instruction. It never understood it in the first place.

The real risks aren’t sci-fi self-improvement scenarios. They’re an agent with too much file access deleting things it shouldn’t, a prompt injection leaking context, or a third-party skill quietly exfiltrating data (Cisco’s security team caught exactly this happening with an OpenClaw skill). These are governance problems, not learning problems, and they require guardrails, not awe.
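As a sketch of what a guardrail can look like when it lives in code instead of in the prompt: the tool names, the `execute` function, and the `confirm` callback below are hypothetical and not taken from any framework. The idea is that the check runs in the runtime, outside the model, so the model's text cannot talk its way past it.

```python
from typing import Callable

# Hypothetical tool names, chosen only for this illustration.
DESTRUCTIVE_TOOLS = {"delete_email"}


def delete_email(message_id: str) -> str:
    return f"deleted {message_id}"


def read_email(message_id: str) -> str:
    return f"contents of {message_id}"


ALLOWED_TOOLS: dict[str, Callable[..., str]] = {
    "delete_email": delete_email,
    "read_email": read_email,
}


def execute(tool: str, args: dict, confirm: Callable[[str], bool]) -> str:
    """Run a tool the model asked for, but only if the policy allows it."""
    if tool not in ALLOWED_TOOLS:
        return f"blocked: {tool} is not on the allowlist"
    if tool in DESTRUCTIVE_TOOLS and not confirm(f"{tool}({args})"):
        return f"blocked: {tool} was not confirmed"
    return ALLOWED_TOOLS[tool](**args)


def deny_all(_description: str) -> bool:
    """Stand-in for a human who says no to every confirmation request."""
    return False


read_result = execute("read_email", {"message_id": "42"}, deny_all)
delete_result = execute("delete_email", {"message_id": "42"}, deny_all)
shell_result = execute("run_shell", {"cmd": "rm -rf ~"}, deny_all)
print(read_result, delete_result, shell_result, sep="\n")
```

Here "confirm before acting" is enforced by the code path that runs the tool. It does not depend on the model honoring a sentence in its prompt.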

What Agentic AI Actually Is (And It’s Still Impressive)

Strip away the marketing and what you have is genuinely useful: an agent that can call tools rather than only generate text. Through mechanisms like MCP and function calling, it can trigger API requests, read and write files, execute code, and chain together multi-step workflows. The LLM itself is still just generating text, but that text is structured as tool calls that external systems execute on its behalf.
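A minimal sketch of that split, with assumptions stated up front: `fake_llm` is a hard-coded stand-in for a model, `get_weather` is a pretend tool, and the JSON shape is invented for this example. It is not the MCP wire format or any vendor's function-calling schema. The model's side produces a string; ordinary code parses that string and does the actual work.

```python
import json
from typing import Callable


def get_weather(city: str) -> str:
    """Pretend tool. A real one would call an external API."""
    return f"(pretend forecast for {city})"


# The runtime, not the model, owns the list of things that can be executed.
TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in: a real model would generate this text itself."""
    return json.dumps({"tool": "get_weather", "arguments": {"city": "Oslo"}})


def run_tool_call(model_output: str) -> str:
    """Parse the model's text and execute the tool it names, if it exists."""
    call = json.loads(model_output)
    name = call.get("tool")
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**call.get("arguments", {}))


model_output = fake_llm("What's the weather in Oslo?")
result = run_tool_call(model_output)
print(model_output)
print(result)
```

Everything the agent "does" happens inside `run_tool_call`. That makes it the natural place for logging, permissions, and review.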

That’s powerful. OpenClaw can manage your calendar and send emails from a Telegram message. CrewAI can coordinate specialized agents where one researches, another writes, and a third reviews. What used to take an afternoon can happen in minutes.

But the value comes from good system design, not from some mythical self-improvement loop.

The Bottom Line

Agentic AI is a meaningful step forward. LLMs that can call external tools and orchestrate multi-step processes open up real possibilities for automating complex work. That’s valuable on its own terms.

We don’t need to pretend agents are something they’re not. The reality is interesting enough.